
11/9/2022 PCA example problems

Indeed, in all cases where something is estimated, the estimated value will deviate from the true value to some (hopefully) small extent, and this difference will vanish with increasing sample size. In the example, when N = 200 the mean correlation is around 0.03. However, the sampling error of the correlations will by necessity affect the calculation of the principal components, which are not merely a strict summary of the patterns but a statistic with associated sampling properties, in the same way as the correlations they are based on (Morrison 1990). Hence, the sampling errors of the principal components must be taken into account.
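The shrinking of sampling error with sample size can be checked with a small simulation. The following is a minimal sketch, not the procedure from the source's Appendix 1: it assumes NumPy, four uncorrelated standard-normal traits, and the mean absolute off-diagonal correlation as the summary statistic (the source does not say exactly how its "mean correlation" is computed, so the numbers need not match). The function name `mean_abs_offdiag_corr` is hypothetical.

```python
import numpy as np

def mean_abs_offdiag_corr(n_samples, n_traits=4, n_reps=500, seed=0):
    """Average absolute off-diagonal sample correlation when all true
    correlations are 0 (assumed setup, for illustration only)."""
    rng = np.random.default_rng(seed)
    upper = np.triu_indices(n_traits, k=1)  # indices of the off-diagonal pairs
    values = []
    for _ in range(n_reps):
        # Independent traits: any nonzero sample correlation is sampling error.
        data = rng.standard_normal((n_samples, n_traits))
        r = np.corrcoef(data, rowvar=False)
        values.append(np.abs(r[upper]).mean())
    return float(np.mean(values))

results = {n: mean_abs_offdiag_corr(n) for n in (20, 200, 2000)}
for n, value in results.items():
    print(f"N = {n:5d}: mean |r| = {value:.3f}")
```

The spurious correlations should become smaller as N grows, but they never reach exactly zero in a finite sample, which is why the principal components derived from them carry sampling error as well.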

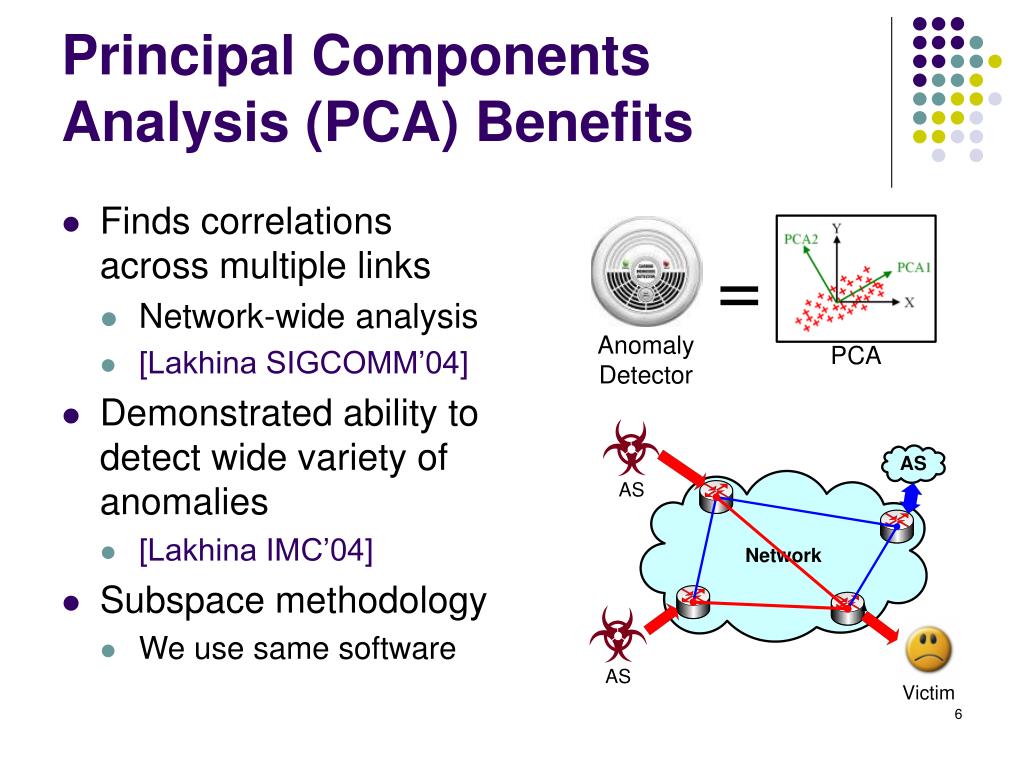

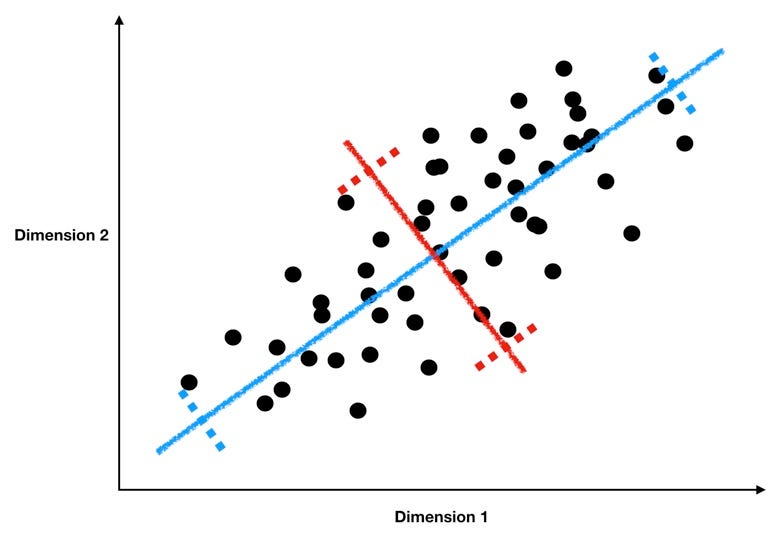

Principal components analysis (PCA) is a common method to summarize a larger set of correlated variables into a smaller number of more easily interpretable axes of variation. However, the different components need to be distinct from each other to be interpretable; otherwise they only represent random directions. This is a fundamental assumption of PCA and, thus, needs to be tested every time. Sample correlation matrices will always result in a pattern of decreasing eigenvalues even if there is no structure. Tests are, therefore, needed to discern real patterns from illusionary ones. Furthermore, the loadings of the vectors need to be larger than expected by random data to be useful in the calculation of PC-scores. PC-scores calculated from nondistinct PCs have very large standard errors and cannot be used for biological interpretations. I give a number of examples to illustrate the potential problems with PCA. Robustness of the PCs increases with increasing sample size but not with the number of traits. I review a few simple test statistics appropriate for testing PCs and use a real-world example to illustrate how this can be done using randomization tests. PCA can be very useful but great care is needed to avoid spurious results.

A common problem in evolutionary biology is that a researcher has a large number of measurements of various variables that might be interpreted for the understanding of, for example, ecological patterns or multivariate selection. One approach to handle this is to simply test variables one by one. However, these variables can be correlated to each other to various degrees, which means that the tests are not independent and the researcher can drown in a multitude of tests that are not easy to interpret.

One very common solution is to try to reduce the dimensionality of the data into fewer independent variables that take the interdependence of variables into account, for example, by a principal component analysis (PCA). One example is given in Figure 1 where four variables, let us say morphological measurements, are correlated to various degrees (the number of traits is arbitrarily chosen for illustration only). The PCA shows that the first principal component (PC1) accounts for almost 44 % of the variation and, based on the trait loadings on the first principal component, can be interpreted as a size factor. The second principal component accounts for 25 % of the variation and can be interpreted as a shape factor describing the size of trait 2 in relation to trait 3. We can then, for example, put this into an ecological context: rather than correlating each trait with the ecological variable of interest, a new variable is created from the trait loadings (the sum of the products of the loadings and the individual values, creating individual PC-scores). In this example this is a size vector, and consequently only one correlation is made using the PC-scores. In this case, it can be inferred that size is negatively correlated with the ecological variable. This is a standard procedure to handle complex data in a more easily understood way. This makes a lot of sense and is not a problem per se.

Figure 1. A sample correlation matrix, with associated eigenvectors (PC-loadings) and eigenvalues, and a correlation with the scores from PC1 and an ecological variable.

The problem with the example in Figure 1 is that the correlation matrix is a sample correlation matrix based on a sample size of N = 20, while the true correlations are all set to 0.0 (for details see Appendix 1). Thus, there is no pattern apart from that created from sampling error alone.
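The claim that a sample correlation matrix yields decreasing eigenvalues even without any structure can be illustrated with a short simulation. The sketch below is an illustration under stated assumptions, not the author's code or test statistics: it assumes NumPy, draws N = 20 observations of four uncorrelated standard-normal traits, and uses a column-wise permutation test on the first eigenvalue as one common kind of randomization test. The function names `pca_from_correlation` and `first_eigenvalue_pvalue` are hypothetical.

```python
import numpy as np

def pca_from_correlation(data):
    """PCA on the sample correlation matrix.

    Returns eigenvalues (descending), loadings (eigenvectors as columns)
    and PC-scores (standardized data multiplied by the loadings).
    """
    z = (data - data.mean(axis=0)) / data.std(axis=0, ddof=1)
    r = np.corrcoef(data, rowvar=False)
    eigvals, eigvecs = np.linalg.eigh(r)   # eigh returns ascending order
    order = np.argsort(eigvals)[::-1]      # reorder to descending
    eigvals, eigvecs = eigvals[order], eigvecs[:, order]
    scores = z @ eigvecs                   # sum of loadings x individual values
    return eigvals, eigvecs, scores

def first_eigenvalue_pvalue(data, n_perm=999, seed=0):
    """Permutation p-value for the largest eigenvalue.

    Each column is shuffled independently, which removes any real
    correlation between traits while keeping each trait's distribution.
    """
    rng = np.random.default_rng(seed)
    observed = pca_from_correlation(data)[0][0]
    count = 0
    for _ in range(n_perm):
        shuffled = np.column_stack([rng.permutation(col) for col in data.T])
        if pca_from_correlation(shuffled)[0][0] >= observed:
            count += 1
    return (count + 1) / (n_perm + 1)

# Assumed null scenario: N = 20, four traits, all true correlations 0.
rng = np.random.default_rng(1)
null_data = rng.standard_normal((20, 4))
eigvals, loadings, scores = pca_from_correlation(null_data)
explained = eigvals / eigvals.sum()
p_value = first_eigenvalue_pvalue(null_data)

print("proportion of variance per PC:", np.round(explained, 2))
print("permutation p-value for PC1:", p_value)
```

Even though the traits are independent by construction, PC1 will account for more than the 25 % expected under perfectly equal eigenvalues, so the proportion of variance alone says nothing about whether a component is real. Comparing the observed eigenvalue with its permutation distribution is one way to ask whether it is larger than expected from random data.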
